Orthogonal

Glossary of Terms

This is a glossary of some of the terminology used in the notes on the physics of Orthogonal. Although the notes generally link to Wikipedia for widely used mathematical and scientific terms, in some cases a shorter and less technical explanation is provided here instead.

This is not a glossary of terms used in the novel. If you’re wondering how many scants there are in a stride or how many scrags in a heft, there’s an appendix for that at the back of the book.

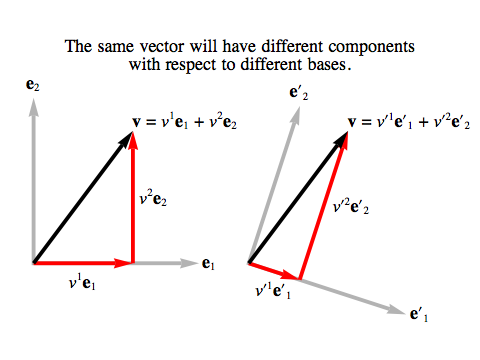

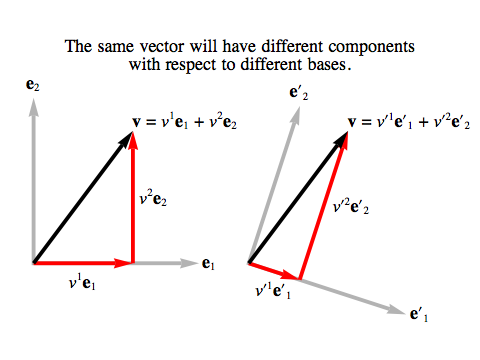

Basis. A basis for an n-dimensional vector space V is a set of n vectors, {e1, e2, ... en}, such that any vector v in V can be written as a sum of multiples of the basis vectors:

v = v1 e1 + v2 e2 + ... + vn en

The numbers v1, v2, ... vn are called the coordinates or components of the vector v with respect to the basis.

The same vector will have different components with respect to different bases.

In these notes, we are mostly concerned with four-dimensional vector spaces, and instead of numbering the basis vectors 1, 2, 3, 4 we’ll often label them with letters:

ex, ey, ez and et.

Most bases we use will be orthonormal: the “normal” part means all the vectors have length 1, and the “ortho” part means they are all mutually perpendicular.

As an example, in the vector space of four-tuples of real numbers, R4, with the standard dot product, one orthonormal basis is the standard basis:

ex = (1, 0, 0, 0)

ey = (0, 1, 0, 0)

ez = (0, 0, 1, 0)

et = (0, 0, 0, 1)
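As a quick check of the definition, the following Python sketch tests whether a set of vectors in R4 is orthonormal under the standard dot product. The helper names `dot` and `is_orthonormal`, and the sample vector `v`, are illustrative choices and do not come from the notes.

```python
def dot(v, w):
    """Standard dot product of two tuples of equal length."""
    return sum(a * b for a, b in zip(v, w))

def is_orthonormal(basis, tol=1e-12):
    """True if every vector has length 1 and distinct vectors are perpendicular."""
    for i, u in enumerate(basis):
        for j, w in enumerate(basis):
            expected = 1.0 if i == j else 0.0
            if abs(dot(u, w) - expected) > tol:
                return False
    return True

e_x, e_y, e_z, e_t = (1, 0, 0, 0), (0, 1, 0, 0), (0, 0, 1, 0), (0, 0, 0, 1)
standard_basis = [e_x, e_y, e_z, e_t]

# For an orthonormal basis, the component of v along each basis vector
# is just the dot product of v with that basis vector.
v = (2, -1, 0, 5)
components = [dot(v, e) for e in standard_basis]
```

Reading components off with a dot product only works because the basis is orthonormal; for a general basis the components have to be found by solving a linear system.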

Determinant. The determinant of an n×n matrix A, written det(A), gives the factor by which the linear function corresponding to A changes the volume of an n-cube. The determinant will be zero if A squashes the n-cube down to less than n dimensions. The determinant will be negative if A reverses the orientation of the n-cube.

For example, in the case of two dimensions, the points {(0,0), (1,0), (1,1), (0,1)} give the vertices of a square with sides of length 1, listed in counter-clockwise order. If we apply A to these points, we get four new points; the absolute value of det(A) will be the area of the parallelogram whose vertices are those points, and det(A) will be negative if the new points end up in clockwise order.

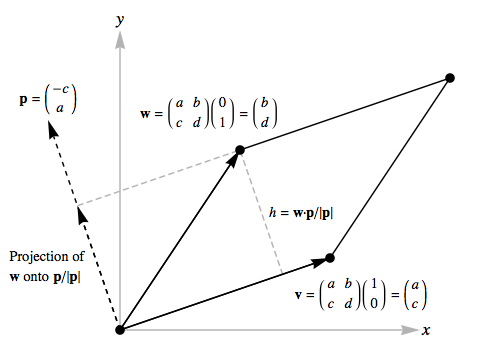

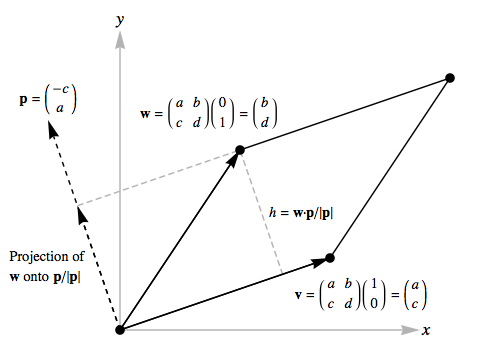

Suppose A is the 2×2 matrix:

$$A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$$

Two edges of the parallelogram will be the vectors v=(a, c) and w=(b, d), the images under A of (1, 0) and (0, 1).

Since the vector p=(–c, a) is perpendicular to v and has the same length as v, the “height” h of the parallelogram – if the edge v is taken as the “base” – is the length of the projection of w onto the unit vector p/|p|:

h = w · p/|p|

Here h will be negative if the tip of w falls below the base v, i.e. if the original square has been mapped onto the parallelogram clockwise.

The area of the parallelogram is the height times the length of the base, and:

det(A) = h |v| = h |p| = w · p = ad–bc

In general, to find the determinant of an n×n matrix A, you list every possible permutation of the numbers {1,2,...n}. Associated with each permutation is a number known as its sign, which is +1 if the permutation involves an even number of swaps from the original order, and –1 if it involves an odd number of swaps. For each permutation, you multiply its sign by n matrix components, one from each row, with the column number determined by the corresponding number in the permutation. The sum of all these products is the determinant:

$$\det(A) = \sum_{p \text{ a permutation of } \{1,2,\ldots,n\}} \operatorname{sign}(p)\, A_{1p(1)} A_{2p(2)} \cdots A_{np(n)}$$

For our example of a 2×2 matrix, the permutations of {1,2} are just {1,2} itself, with sign 1, and {2,1}, with sign –1, so the determinant is:

det(A) = A11 A22 – A12 A21 = ad–bc

One very useful special case is the determinant of a diagonal matrix, D, a matrix whose components are 0 except on the diagonal. In this case, the only permutation whose product sticks to the diagonal is the one that leaves all the numbers {1,2,...n} in their original order, so we have:

det(D) = D11 D22 ... Dnn
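The permutation formula can be turned into code almost word for word. The sketch below is a direct, unoptimised transcription in Python; the function names `sign` and `det` are chosen here for illustration, rows and columns are numbered from 0 rather than 1, and the sign is computed by counting inversions, which has the same parity as the number of swaps.

```python
from itertools import permutations
from math import prod

def sign(p):
    """+1 for an even permutation, -1 for an odd one (parity of inversions)."""
    n = len(p)
    inversions = sum(1 for i in range(n) for j in range(i + 1, n) if p[i] > p[j])
    return -1 if inversions % 2 else 1

def det(A):
    """Determinant of a square matrix (a list of rows) via the sum over permutations."""
    n = len(A)
    return sum(
        sign(p) * prod(A[i][p[i]] for i in range(n))  # one component from each row
        for p in permutations(range(n))
    )
```

Because the sum runs over all n! permutations, this is only practical for small matrices; it is meant to mirror the definition, not to compete with elimination-based methods.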

Dot product. In four-dimensional Riemannian geometry, the dot product of two vectors v and w, written as v · w, is given by:

v · w = vx wx + vy wy + vz wz + vt wt

where vx etc. and wx etc. are the components of the vectors in an orthonormal basis.

This generalises to the n-dimensional case in the obvious way. Using the Einstein summation convention:

v · w = vi wi

The dot product of two vectors is related to their lengths and the angle between them, θ:

v · w = |v| |w| cos θ
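As an illustration of this relation, here is a small Python sketch that recovers the angle between two vectors from their dot product. The function names are hypothetical, and the components are assumed to be given in an orthonormal basis.

```python
import math

def dot(v, w):
    """Dot product of two vectors given by components in an orthonormal basis."""
    return sum(a * b for a, b in zip(v, w))

def length(v):
    """Length of a vector, |v| = sqrt(v · v)."""
    return math.sqrt(dot(v, v))

def angle_between(v, w):
    """Angle in radians, from v · w = |v| |w| cos θ (v and w must be non-zero)."""
    cos_theta = dot(v, w) / (length(v) * length(w))
    # Clamp to [-1, 1] to guard against rounding error before calling acos.
    return math.acos(max(-1.0, min(1.0, cos_theta)))
```

For instance, `angle_between((1, 0, 0, 0), (1, 1, 0, 0))` comes out close to π/4, and two distinct standard basis vectors give π/2.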

The definition we’ve given here, which starts from an orthonormal basis, is appropriate for physical applications, where we take it for granted that we can measure the length of a vector, and determine on physical grounds whether or not it’s perpendicular to another vector.

In pure mathematics, though, we need to start with a dot product in order to define an orthonormal basis; it’s the fact that ex · ey = 0 that tells you ex and ey are orthogonal, and ex · ex = 1 tells you ex has a length of 1. So for example, when dealing with the vector space of four-tuples of real numbers, R4, we would generally use the standard dot product, which we define as:

(vx, vy, vz, vt) · (wx, wy, wz, wt) = vx wx + vy wy + vz wz + vt wt

which leads to the standard basis for R4 being orthonormal.

Details in the notes on Dot products.

Dual. If V is a real vector space, then a dual vector on V is a linear function f from V to the real numbers R.

The dual space to V, written V*, is the set of all dual vectors on V, made into a vector space in its own right.

If $\{e_1, e_2, \ldots, e_n\}$ is a basis for V, then the dual basis for V*, $\{e^1, e^2, \ldots, e^n\}$, is a set of functions on V that satisfies the condition:

$$e^i(e_j) = \delta^i_j$$

where $\delta^i_j$, known as the Kronecker delta symbol, is 1 if i=j and 0 if i≠j.

Details in the notes on Dual vectors.

Einstein summation convention. The Einstein summation convention is a convenient short-hand for writing the sums of terms in an expression involving the components of vectors and matrices. Using the summation convention, whenever the same index variable appears twice in a product, the implication is that the product is actually a sum over all applicable values of that index. So if we write:

Mij vj

where vj indicates a component of an n-dimensional vector v, the index j appears twice and the summation convention tells us to expand this as:

Mij vj = Mi1 v1 + Mi2 v2 + ... + Min vn

Note that when we write a term like vx wx, the repeated “x” here is labelling a particular component of each of these vectors, so the summation convention does not apply. Whenever x, y, z or t appear as superscripts or subscripts, they are simply meant as labels corresponding to the traditional coordinate axes, not as index variables that need to be summed over.
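In code, a repeated index simply becomes a loop (or a `sum`) over that index, while an unrepeated index remains free and labels the entries of the result. A minimal Python sketch, with the function name `contract` chosen here for illustration and indices counted from 0:

```python
def contract(M, v):
    """Components Mij vj: j is repeated, so it is summed; i stays free."""
    n = len(v)
    return [sum(M[i][j] * v[j] for j in range(n)) for i in range(len(M))]

def dot(v, w):
    """vi wi: the single repeated index i is summed, leaving a plain number."""
    return sum(v[i] * w[i] for i in range(len(v)))

M = [[1, 2],
     [3, 4]]
v = [5, 6]
result = contract(M, v)   # one entry for each value of the free index i
```

Here `result` holds `1*5 + 2*6` and `3*5 + 4*6`, one sum for each value of the free index.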

Euclidean group. The Euclidean group E(n) is the group of all symmetries of n-dimensional Euclidean space, including translations, rotations and reflections.

In these notes, we are mostly concerned with E(4), the symmetries of 4-dimensional Euclidean space.

The subgroup of the Euclidean group that includes only translations and rotations, ruling out reflections, is known as the special Euclidean group, SE(n).

Details in the notes on Symmetries.

Euclidean universe. The term “Euclidean universe” is used in these notes to describe an idealised four-dimensional Riemannian universe that is infinite and flat, and hence obeys the postulates of Euclidean geometry.

In our own universe, the geometry of small regions of space far from any strong gravitational fields is very close to Euclidean, but the geometry of space-time in such regions isn’t, because intervals in time do not obey Pythagoras’s Theorem.

In our universe, nearly-flat space-time is approximated by Minkowski space-time.

NB: In the physics literature, the term “Euclidean” is frequently applied to the versions of physical laws produced by Wick rotation. These are not the laws applicable in the universe of Orthogonal.

Group. In mathematics, a group is a set G along with an “operation”: a way of combining two elements of G to yield a third element.

This group operation is sometimes called “multiplication”, and the element we get by combining the elements g and h is usually written gh. It must obey similar rules to the ordinary multiplication of positive numbers, but it need not be commutative: that is, it’s possible that gh≠hg.

- Group “multiplication” must be associative: (gh)k = g(hk).

- The group must contain an identity, usually called e (but sometimes called 1 or I for matrix groups) with ge = eg = g.

- Every element g of the group must have an inverse, $g^{-1}$, which satisfies $g g^{-1} = g^{-1} g = e$.

Some examples of commutative groups:

- The positive real numbers, R+, with ordinary multiplication as the group operation.

- The vector space of n-tuples of real numbers, Rn, with vector addition as the group operation and the zero vector as the identity.

Some examples of non-commutative groups:

- The set of n×n matrices of real numbers with non-zero determinant, written GL(n), with matrix multiplication as the group operation.

- The set of n×n matrices of real numbers whose transpose is equal to their inverse, written O(n), with matrix multiplication as the group operation.
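To make the group rules concrete, the following Python sketch checks them for a few hand-picked elements of GL(2). The particular matrices `g`, `h` and `g_inv` are illustrative choices, not examples from the notes, and checking a handful of elements does not prove the rules in general.

```python
def matmul(A, B):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

I = [[1, 0], [0, 1]]        # the identity element
g = [[1, 1], [0, 1]]        # determinant 1, so g is in GL(2)
h = [[1, 0], [1, 1]]        # determinant 1, so h is in GL(2)
g_inv = [[1, -1], [0, 1]]   # the inverse of g

gh = matmul(g, h)
hg = matmul(h, g)
non_commutative = gh != hg                                  # gh and hg differ
has_identity = matmul(g, I) == g and matmul(I, g) == g      # ge = eg = g
has_inverse = matmul(g, g_inv) == I and matmul(g_inv, g) == I
associative = matmul(matmul(g, h), g_inv) == matmul(g, matmul(h, g_inv))
```

All four flags come out `True` for these matrices: the group rules hold, but the order of multiplication matters.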

Identity matrix. The n×n identity matrix, written $I_n$, is the matrix whose components are all zero except along the diagonal, where they are 1. If n is clear from the context, we will just write I rather than $I_n$.

For example, $I_4$ is:

$$I_4 = \begin{pmatrix} 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \\ 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 1 \end{pmatrix}$$

Multiplication with the identity matrix leaves any other matrix unchanged; if A is any n×n matrix, then:

$$A I_n = I_n A = A$$

Inverse matrix. The inverse of an n×n matrix A is the n×n matrix, written $A^{-1}$, such that:

$$A A^{-1} = A^{-1} A = I_n$$

where $I_n$ is the n×n identity matrix. The inverse of A will exist if and only if the determinant of A is not zero.

The inverse of the product of matrices is the product, in reverse order, of the inverses of the individual matrices:

$$(AB)^{-1} = B^{-1} A^{-1}$$
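The reverse-order rule is easy to check for 2×2 matrices. The Python sketch below uses the standard adjugate formula for a 2×2 inverse, which is not stated in this glossary and is brought in here as an assumption, along with exact fractions to avoid rounding. The matrices `A` and `B` are arbitrary examples.

```python
from fractions import Fraction

def matmul(A, B):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def inverse_2x2(A):
    """Inverse of a 2x2 matrix; raises ValueError if the determinant is zero."""
    (a, b), (c, d) = A
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular: determinant is zero")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

A = [[2, 1], [1, 1]]
B = [[1, 2], [3, 4]]

lhs = inverse_2x2(matmul(A, B))                # (AB)^-1
rhs = matmul(inverse_2x2(B), inverse_2x2(A))   # B^-1 A^-1, in reverse order
```

The two sides agree exactly, whereas multiplying the inverses in the original order, `matmul(inverse_2x2(A), inverse_2x2(B))`, gives a different matrix for this choice of `A` and `B`.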

Linear function. A linear function is a function from one vector space to another (perhaps the same one), such that it makes no difference whether you apply the function before or after adding vectors or multiplying them by numbers. That is, the function f is linear if:

f(v + w) = f(v) + f(w)

f(s v) = s f(v)

If we have a linear function f from an m-dimensional vector space V to an n-dimensional vector space W, and we pick bases {e1, e2, ... em} for V and {e'1, e'2, ... e'n} for W, we can describe f in terms of an n×m matrix M. The matrix component Mij is the ith component, with respect to our basis for W, of the vector f(ej), and using the Einstein summation convention:

f(v) = f(vj ej) = vj f(ej) = Mij vj e'i
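The recipe "column j of M holds the components of f(ej)" can be sketched in Python as follows. The sketch assumes the standard bases of R^m and R^n, and the sample function `f` from R^3 to R^2 is a made-up example; `matrix_of` and `apply` are likewise illustrative names.

```python
def matrix_of(f, m):
    """Matrix (list of rows) of a linear function f on R^m, using standard bases."""
    # f applied to each standard basis vector gives one column of the matrix.
    columns = [f(tuple(1 if k == j else 0 for k in range(m))) for j in range(m)]
    n = len(columns[0])
    return [[columns[j][i] for j in range(m)] for i in range(n)]

def apply(M, v):
    """Components Mij vj of the image vector, summing over j."""
    return tuple(sum(row[j] * v[j] for j in range(len(v))) for row in M)

def f(v):
    """A hypothetical linear function from R^3 to R^2."""
    x, y, z = v
    return (x + 2 * y, 3 * z - y)

M = matrix_of(f, 3)   # a 2×3 matrix
```

Applying `M` to the components of any vector then reproduces `f`, for example `apply(M, (1, 1, 1)) == f((1, 1, 1))`.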

Lorentzian. The adjective “Lorentzian” is used in these notes to distinguish the physics that applies in our own universe from that which applies in the universe of Orthogonal.

Details in the notes on Lorentzian and Riemannian Geometry.

[Why “Lorentzian”? Hendrik Antoon Lorentz was a Dutch physicist who developed the mathematical framework that Einstein employed in special relativity. The term “Lorentzian” is used to refer to a geometry where one of the dimensions is distinguished from the others as a time dimension. In contrast, in a Riemannian space, all dimensions are fundamentally the same.]

Matrix. An n×m matrix M is a grid or table of numbers (in these notes, either real or complex numbers), with n rows of m numbers.

The individual numbers are known as the components of the matrix; we will write Mij for the jth number in the ith row of the matrix M.

If A is an n×m matrix and B is an m×p matrix, we can multiply A by B to form an n×p matrix C=AB, with:

Cij = Ai1 B1j + Ai2 B2j + ... + Aim Bmj

or using the Einstein summation convention, simply:

Cij = Aik Bkj

Note that in order for the matrix product AB to exist, the size of the rows of A must equal the size of the columns of B. If A and B are square matrices of the same size then both AB and BA will exist, but they will not generally be equal.
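The size rule for matrix products can be illustrated with a short Python sketch. The function `matmul` and the example matrices are hypothetical; matrices are represented as lists of rows.

```python
def matmul(A, B):
    """Product of an n×m matrix A and an m×p matrix B, as lists of rows."""
    m = len(B)  # number of rows of B, i.e. the size of its columns
    if any(len(row) != m for row in A):
        raise ValueError("size of the rows of A must equal size of the columns of B")
    return [[sum(A[i][k] * B[k][j] for k in range(m)) for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2×3
B = [[1, 0],
     [0, 1],
     [1, 1]]         # 3×2

AB = matmul(A, B)    # 2×2
BA = matmul(B, A)    # 3×3
```

In this example both products exist but do not even have the same shape, and `matmul(A, A)` raises an error because a 2×3 matrix cannot be multiplied by another 2×3 matrix.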

We can treat an n×m matrix M as a linear function from an m-dimensional vector space V to an n-dimensional vector space W, by thinking of any vector v in V as an m×1 matrix, consisting of a single column of numbers which are the m components of v in some chosen basis. Then the matrix product Mv gives us an n×1 matrix, which we can think of as the n components of a vector in W in some chosen basis. So M, along with a choice of bases in V and W, gives us a linear function f from V to W.

If the basis for V is {e1, e2, ... em} and the basis for W is {e'1, e'2, ... e'n}, we can write, using the Einstein summation convention:

f(v) = f(vj ej) = vj f(ej) = Mij vj e'i

Null Vector. A vector that points along the world line traced out by light in ordinary space-time.

Details in the notes on Lorentzian and Riemannian Geometry.

O(4). The set of 4×4 matrices of real numbers whose transpose is equal to their inverse is known as O(4).

It is a group, with matrix multiplication as the group operation, and another name for it is the 4-dimensional orthogonal group.

If these matrices are treated as linear functions on four-space, they describe all rotations and reflections in that space.

Details in the notes on Symmetries.

Riemannian. The adjective “Riemannian” is used in these notes to distinguish the physics that applies in the universe of Orthogonal from that which applies in our own universe.

Details in the notes on Lorentzian and Riemannian Geometry.

[Why “Riemannian”? Georg Friedrich Bernhard Riemann was one of the pioneers of the field of differential geometry, a subject that generalises Euclidean geometry to apply to curved surfaces and spaces. Within that field, mathematicians use the term “Riemannian” to describe geometries that we’d normally think of as kinds of space, whether flat or curved, where all the dimensions are treated as fundamentally the same. In contrast, in the Lorentzian space-time of our own universe, one of the dimensions, time, is singled out for special treatment.]

Riemannian Scalar Wave (RSW) Equation. This is the equation for a scalar wave A moving through the vacuum of the Riemannian universe:

$$\partial_x^2 A + \partial_y^2 A + \partial_z^2 A + \partial_t^2 A + \omega_m^2 A = 0 \qquad \text{(RSW)}$$

Details in the notes on Scalar Waves.

Riemannian Vector Wave (RVW) Equations. These are the equations for a vector wave A moving through the vacuum of the Riemannian universe:

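$$\partial_x^2 \mathbf{A} + \partial_y^2 \mathbf{A} + \partial_z^2 \mathbf{A} + \partial_t^2 \mathbf{A} + \omega_m^2 \mathbf{A} = \mathbf{0} \qquad \text{(RVW)}$$

$$\partial_x A^x + \partial_y A^y + \partial_z A^z + \partial_t A^t = 0 \qquad \text{(Transverse)}$$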

Details in the notes on Vector Waves.

We can extend the first equation to include a source term:

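$$\partial_x^2 \mathbf{A} + \partial_y^2 \mathbf{A} + \partial_z^2 \mathbf{A} + \partial_t^2 \mathbf{A} + \omega_m^2 \mathbf{A} + \mathbf{j} = \mathbf{0} \qquad \text{(RVWS)}$$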

Details in the notes on Riemannian Electromagnetism.

Scalar. A number that all observers will agree on, because it does not rely on their choice of coordinate system.

For example, air pressure is a scalar, but “the component of the electric field in the x direction” isn’t.

SO(4). The set of 4×4 matrices of real numbers whose transpose is equal to their inverse and whose determinant is 1 is known as SO(4).

It is a group, with matrix multiplication as the group operation, and another name for it is the 4-dimensional special orthogonal group.

If these matrices are treated as linear functions on four-space, they describe all rotations in that space.

Details in the notes on Symmetries.
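The defining conditions for O(4) and SO(4) can be tested numerically. The Python sketch below checks "transpose equals inverse" in the equivalent form AᵀA = I, and computes the determinant by the sum over permutations. The function names, the rotation angle and the two example matrices (a rotation in the x-t plane and a reflection of the t axis) are illustrative assumptions.

```python
import math
from itertools import permutations

def transpose(A):
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def det(A):
    """Determinant via the sum over permutations (fine for 4×4: 24 terms)."""
    n = len(A)
    total = 0
    for p in permutations(range(n)):
        inversions = sum(1 for i in range(n) for j in range(i + 1, n) if p[i] > p[j])
        sign = -1 if inversions % 2 else 1
        total += sign * math.prod(A[i][p[i]] for i in range(n))
    return total

def is_orthogonal(A, tol=1e-12):
    """True if A^T A is the identity to within tol, i.e. A^T is the inverse of A."""
    n = len(A)
    P = matmul(transpose(A), A)
    return all(abs(P[i][j] - (1 if i == j else 0)) <= tol
               for i in range(n) for j in range(n))

def is_special_orthogonal(A, tol=1e-12):
    """True if A is orthogonal and has determinant 1."""
    return is_orthogonal(A, tol) and abs(det(A) - 1) <= tol

theta = 0.3
c, s = math.cos(theta), math.sin(theta)
rotation_xt = [[c, 0, 0, -s],
               [0, 1, 0, 0],
               [0, 0, 1, 0],
               [s, 0, 0, c]]        # rotates the x-t plane by theta
reflection_t = [[1, 0, 0, 0],
                [0, 1, 0, 0],
                [0, 0, 1, 0],
                [0, 0, 0, -1]]      # reverses the t axis
```

With these definitions the rotation passes both tests, while the reflection is orthogonal but has determinant –1, so it belongs to O(4) but not to SO(4).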

Subspace. A subspace of a vector space V is a subset of V that is itself a vector space.

For example, in a vector space of two or more dimensions any line that passes through the origin will be a 1-dimensional subspace of the original vector space.

But not all subsets of a vector space are subspaces: the sphere of all vectors of length 1 does not form a subspace because you can add two vectors of length 1 to get another vector of a different length, outside the chosen subset.

Transpose. The transpose of an n×m matrix A is the m×n matrix, written $A^T$, whose columns are the rows of the original matrix.

The transpose of the product of matrices is the product, in reverse order, of the transposes of the individual matrices:

$$(AB)^T = B^T A^T$$
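A short Python sketch of the transpose and the reverse-order rule, using arbitrary example matrices and illustrative function names:

```python
def transpose(A):
    """Transpose of a matrix given as a list of rows: rows become columns."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2×3
B = [[1, 0],
     [0, 1],
     [1, 1]]         # 3×2

lhs = transpose(matmul(A, B))             # (AB)^T
rhs = matmul(transpose(B), transpose(A))  # B^T A^T
```

Both sides are the same 2×2 matrix. With these shapes the product in the original order, `transpose(A)` times `transpose(B)`, would be a 3×3 matrix instead, so the reversal is not optional.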

Vector space. A vector space V is a set of objects that we can add to each other, and multiply by numbers of some kind (in these notes, the numbers will either be real numbers or complex numbers).

- Every vector space includes a zero vector, 0, that can be added to any vector without changing it: v+0 = v.

- Every vector v has an opposite (or additive inverse), –v, such that v+(–v) = 0.

- Multiplication distributes over addition: s (v+w) = s v + s w and (r + s) v = r v + s v.

- We can also define subtraction of one vector from another: v–w = v+(–w).

- A vector space must be closed under all these operations. If we’ve chosen a set V and defined these operations on it, they must never yield results that lie outside V.

For example, let V be the set of all four-tuples of real numbers, R4. Then we define addition, multiplication, the zero vector, and the opposite of a vector in the obvious ways:

- (x, y, z, t) + (a, b, c, d) = (x+a, y+b, z+c, t+d)

- s (x, y, z, t) = (sx, sy, sz, st)

- 0 = (0, 0, 0, 0)

- –(x, y, z, t) = (–x, –y, –z, –t)

Wick rotation. Wick rotation is a procedure employed in modern physics in order to analyse certain problems in our own universe. In Wick rotation, time t is replaced by an imaginary number iτ and nothing else is changed.

In contrast to this, the physics of the novel Orthogonal involves changing the signs of some parameters in various equations, leading to results very different from those of Wick rotation.

For example, the ordinary, Lorentzian Klein-Gordon equation in natural units is:

$$\partial_x^2 \psi + \partial_y^2 \psi + \partial_z^2 \psi - \partial_t^2 \psi - m^2 \psi = 0 \qquad (1)$$

(You will often see this written as $\partial_\mu \partial^\mu \psi + m^2 \psi = 0$, summing over μ with the Einstein summation convention, but the positive sign for the $m^2 \psi$ term comes about because the author is using the (+–––) signature for the Minkowski metric, i.e. $\partial_\mu \partial^\mu \psi = \partial_t^2 \psi - \partial_x^2 \psi - \partial_y^2 \psi - \partial_z^2 \psi$.)

The Wick-rotated version, where t → iτ, is:

$$\partial_x^2 \psi + \partial_y^2 \psi + \partial_z^2 \psi + \partial_\tau^2 \psi - m^2 \psi = 0 \qquad (2)$$

The Riemannian version that applies in the universe of Orthogonal is similar, but the sign of the $m^2$ term is changed:

$$\partial_x^2 \psi + \partial_y^2 \psi + \partial_z^2 \psi + \partial_t^2 \psi + m^2 \psi = 0 \qquad (3)$$

The ordinary Klein-Gordon equation (1) has bounded plane-wave solutions:

$$\psi(x) = \sin(k^x x + k^y y + k^z z - k^t t) \qquad (4)$$

where k is any timelike vector with $(k^x)^2 + (k^y)^2 + (k^z)^2 - (k^t)^2 = -m^2$.

The version (3) that applies in the universe of Orthogonal also has bounded plane-wave solutions:

$$\psi(x) = \sin(k^x x + k^y y + k^z z + k^t t) \qquad (5)$$

where $|k|^2 = m^2$.

But all solutions of the Wick-rotated version, (2), grow exponentially in at least one direction. If we substituted the solution (5) into equation (2) it would give $|k|^2 = -m^2$, which can only be true if at least one component of k is imaginary – and the sine of an imaginary quantity grows exponentially.
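To spell out the substitution step, here is a short worked check. It assumes the Riemannian plane wave has the form ψ = sin φ, where the phase φ (a symbol introduced here for brevity) adds up each component of k times the corresponding coordinate. Each second derivative then pulls down a factor of minus the square of that component.

$$
\begin{aligned}
\psi &= \sin\varphi, \qquad \varphi = k^x x + k^y y + k^z z + k^t t \\
\partial_x^2\psi + \partial_y^2\psi + \partial_z^2\psi + \partial_t^2\psi
  &= -\left[(k^x)^2 + (k^y)^2 + (k^z)^2 + (k^t)^2\right]\psi = -|k|^2\,\psi \\
\text{(3):}\quad -|k|^2\,\psi + m^2\,\psi = 0 &\;\Longrightarrow\; |k|^2 = m^2 \\
\text{(2), with } t \text{ relabelled } \tau\text{:}\quad -|k|^2\,\psi - m^2\,\psi = 0 &\;\Longrightarrow\; |k|^2 = -m^2
\end{aligned}
$$

For a purely imaginary argument, $\sin(iu) = i \sinh(u)$, and $\sinh(u)$ grows like $e^{|u|}/2$ for large $|u|$, which is the exponential growth referred to above.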

Orthogonal / Glossary / created Wednesday, 6 April 2011

Copyright © Greg Egan, 2011. All rights reserved.